Model Context Protocol: Why MCP Changes Everything

MCP lets AI agents use tools, read files, and interact with real systems. I've been building with it since day one — here's what most people miss.

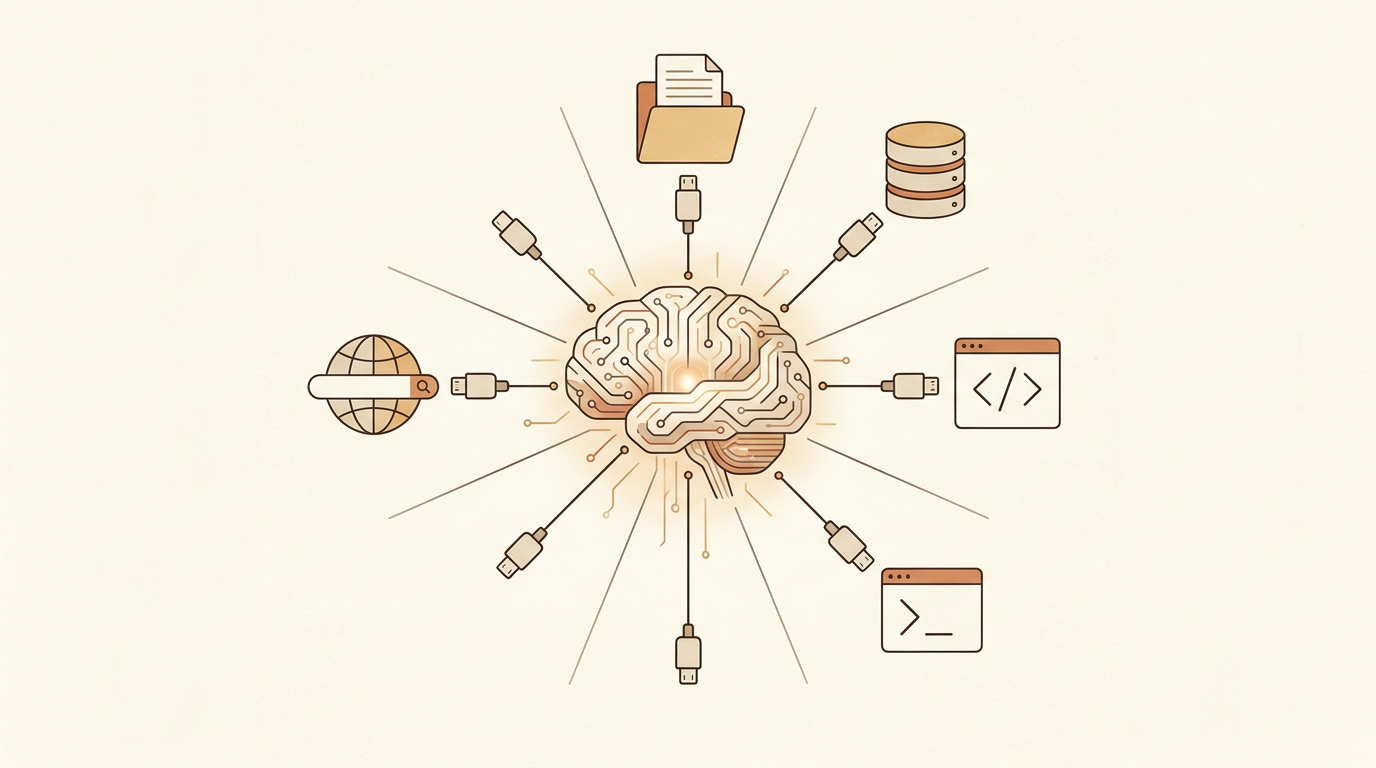

Model Context Protocol isn't just another API wrapper. It's the first serious attempt at giving AI agents a standardized way to interact with the real world — file systems, databases, APIs, browser automation. If you're building with AI agents in 2026, MCP is the infrastructure layer you can't ignore.

The path to MCP was paved by a decade of increasingly sophisticated API integrations. We went from REST APIs to GraphQL to function calling in LLMs. Each step gave software, and eventually AI models, more structured ways to interact with external systems. But they all shared a fundamental limitation: every tool had to be wired in ahead of time, typically through hand-written definitions in the system prompt or the request itself. MCP flips this: servers advertise their tools with standardized descriptions (a name, a description, and a JSON Schema for the inputs), and any MCP-compatible client can discover and call them at runtime. This is the difference between hardcoding a phone book and having a universal directory service.
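To make the discovery step concrete, here is a minimal sketch of the JSON-RPC 2.0 exchange behind it, using only the Python standard library. The `tools/list` method and the `name`, `description`, and `inputSchema` fields follow the MCP specification as I understand it; the `read_file` tool and the in-memory `handle_request` function are hypothetical stand-ins for a real server and transport.

```python
import json

# Hypothetical tool catalogue that a server might advertise.
TOOLS = [
    {
        "name": "read_file",
        "description": "Read a UTF-8 text file and return its contents.",
        "inputSchema": {
            "type": "object",
            "properties": {"path": {"type": "string"}},
            "required": ["path"],
        },
    }
]


def handle_request(raw: str) -> str:
    """Answer one JSON-RPC request (in-memory stand-in for a server)."""
    request = json.loads(raw)
    if request.get("method") == "tools/list":
        response = {
            "jsonrpc": "2.0",
            "id": request["id"],
            "result": {"tools": TOOLS},
        }
    else:
        # -32601 is the standard JSON-RPC "Method not found" code.
        response = {
            "jsonrpc": "2.0",
            "id": request.get("id"),
            "error": {"code": -32601, "message": "Method not found"},
        }
    return json.dumps(response)


def discover_tools() -> list[str]:
    """Client side: ask which tools exist instead of hardcoding them."""
    request = json.dumps({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
    reply = json.loads(handle_request(request))
    return [tool["name"] for tool in reply["result"]["tools"]]
```

Calling `discover_tools()` returns the names the server advertised, so adding a tool to `TOOLS` requires no change on the client side.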

The core insight is simple: LLMs are powerful reasoners, but on their own they can't act. They can write correct SQL but can't execute it. They can design an API but can't deploy it. MCP bridges that gap by giving models access to tools through a standardized protocol.

The MCP ecosystem currently spans several tool categories that cover most development workflows. File system servers provide read and write access to local codebases. Shell servers execute terminal commands with output capture. Browser automation servers control headless or visible browsers for testing and scraping. Database servers query PostgreSQL, MySQL, or SQLite directly. Specialized servers connect to APIs like GitHub, Slack, Linear, and Figma. The standardized protocol means I can swap out any server implementation without changing how the model interacts with it — a Firestore MCP server and a PostgreSQL MCP server expose the same interface patterns.
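The swap-out property can be sketched in plain Python. This is not the MCP SDK: `QueryBackend`, `SqliteBackend`, `InMemoryBackend`, and `call_count_users_tool` are hypothetical names, meant only to show that the caller depends on the shape of the tool rather than on the storage engine behind it. The returned dictionary imitates the text-content shape of an MCP tool result.

```python
import sqlite3
from typing import Protocol


class QueryBackend(Protocol):
    """What the tool layer needs from any storage engine."""

    def count_users(self) -> int: ...


class SqliteBackend:
    """Relational backend, using an in-memory SQLite database for the demo."""

    def __init__(self) -> None:
        self.conn = sqlite3.connect(":memory:")
        self.conn.execute("CREATE TABLE users (name TEXT)")
        self.conn.executemany(
            "INSERT INTO users VALUES (?)", [("ada",), ("lin",)]
        )

    def count_users(self) -> int:
        return self.conn.execute("SELECT COUNT(*) FROM users").fetchone()[0]


class InMemoryBackend:
    """Stand-in for a document store such as Firestore."""

    def __init__(self) -> None:
        self.docs = [{"name": "ada"}, {"name": "lin"}]

    def count_users(self) -> int:
        return len(self.docs)


def call_count_users_tool(backend: QueryBackend) -> dict:
    """Return a result shaped like an MCP tool result with text content."""
    return {
        "content": [{"type": "text", "text": str(backend.count_users())}],
        "isError": False,
    }
```

Both `call_count_users_tool(SqliteBackend())` and `call_count_users_tool(InMemoryBackend())` produce the same result shape, which is the property that lets a server be replaced without touching the caller.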

In my workflow, MCP servers provide Claude with direct access to my codebase, terminal, and browser. When I ask Claude to 'fix the bug in the authentication flow,' it can read the relevant files, understand the context, make the fix, run tests, and verify the result — all without me copy-pasting code back and forth.

Debugging with MCP-enabled agents has fundamentally changed my troubleshooting workflow. Instead of manually reading stack traces, checking logs, and testing hypotheses, I describe the problem and let the agent investigate. A typical debugging session looks like this: the agent reads the error log, identifies the failing function, reads the source code, traces the data flow to find the root cause, writes a fix, runs the relevant tests, and reports back with a summary. What used to take me 30 minutes of context-switching between files and terminals now takes under 5 minutes of the agent working on its own.

The key architectural decision in MCP is the separation between the model (the thinker) and the tools (the doers). This means any tool exposed through an MCP server works with any MCP-compatible client, whichever model sits behind it. It's the USB of AI development: a universal connector.
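A rough sketch of that separation, in plain Python rather than the MCP SDK: the tools live in one registry, the "models" are stubs that only emit a tool name plus arguments, and a small `execute` function routes between them. All names here (`TOOLS`, `execute`, `model_a`, `model_b`) are hypothetical; the `name` and `arguments` keys mirror the parameters of an MCP `tools/call` request.

```python
from typing import Callable


# Tool side: plain functions that know nothing about any model.
def add(a: int, b: int) -> int:
    return a + b


def shout(text: str) -> str:
    return text.upper()


TOOLS: dict[str, Callable[..., object]] = {"add": add, "shout": shout}


def execute(call: dict) -> object:
    """Host side: route a tool call to the matching tool."""
    name = call["name"]
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**call["arguments"])


# Model side: two stub "models" that only decide which tool to call.
def model_a(prompt: str) -> dict:
    return {"name": "add", "arguments": {"a": 2, "b": 3}}


def model_b(prompt: str) -> dict:
    return {"name": "shout", "arguments": {"text": prompt}}
```

Either stub can be swapped for the other without changing `TOOLS` or `execute`, and a new tool can be registered without changing either stub.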

Building custom MCP servers is where the protocol's extensibility becomes tangible. I've built three custom servers: one that wraps our internal deployment pipeline, one that queries our analytics database with pre-approved query templates, and one that manages feature flags across environments. Each server took less than a day to build because the MCP SDK handles the protocol layer — transport, message framing, capability negotiation. The developer only needs to implement the tool logic itself. This low barrier to entry is crucial for adoption: if building an MCP server required weeks of infrastructure work, only large teams would bother.
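As an illustration of the "pre-approved query templates" idea, here is a minimal sketch of the tool logic only, assuming an SQLite database and made-up table and template names. It is not the code from my servers, and it leaves out the SDK registration step; the point is that the agent picks a template by name and supplies a value, but never writes raw SQL.

```python
import sqlite3

# Hypothetical allow-list: the only queries the agent may run.
TEMPLATES = {
    "signups_since": "SELECT COUNT(*) FROM signups WHERE day >= ?",
    "signups_by_plan": "SELECT COUNT(*) FROM signups WHERE plan = ?",
}


def make_demo_db() -> sqlite3.Connection:
    """Build a small in-memory database so the sketch is runnable."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE signups (day TEXT, plan TEXT)")
    conn.executemany(
        "INSERT INTO signups VALUES (?, ?)",
        [("2026-01-01", "free"), ("2026-01-02", "pro"), ("2026-01-03", "pro")],
    )
    return conn


def run_template(conn: sqlite3.Connection, template: str, value: str) -> int:
    """Run an approved template with a bound parameter; reject anything else."""
    if template not in TEMPLATES:
        raise ValueError(f"template not approved: {template}")
    # The value is bound as a parameter, never interpolated into the SQL.
    row = conn.execute(TEMPLATES[template], (value,)).fetchone()
    return row[0]
```

For example, `run_template(make_demo_db(), "signups_by_plan", "pro")` counts the pro signups in the demo data, while an unknown template name raises `ValueError` before any SQL runs.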

Where MCP gets really powerful is chaining. An agent can read a GitHub issue, check out the relevant branch, understand the codebase, write a fix, run tests, and create a pull request — all in one autonomous loop. This isn't theoretical; I use this workflow daily.
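The chaining idea can be sketched as a loop that threads state through a list of steps. Everything here is a stub: `read_issue`, `write_fix`, `run_tests`, and `open_pull_request` are hypothetical functions that stand in for real tool calls, and the issue text is invented for the demo.

```python
from typing import Callable

State = dict[str, object]
Step = Callable[[State], State]


def read_issue(state: State) -> State:
    return {**state, "issue": "login fails on empty password"}


def write_fix(state: State) -> State:
    return {**state, "patch": f"fix for: {state['issue']}"}


def run_tests(state: State) -> State:
    # Stub: the tests "pass" only if a patch was produced earlier.
    return {**state, "tests_passed": "patch" in state}


def open_pull_request(state: State) -> State:
    if not state.get("tests_passed"):
        raise RuntimeError("refusing to open a PR with failing tests")
    return {**state, "pr_title": f"Fix: {state['issue']}"}


def run_chain(steps: list[Step]) -> State:
    """Run each step in order, feeding it the state left by the previous one."""
    state: State = {}
    for step in steps:
        state = step(state)
    return state
```

Running `run_chain([read_issue, write_fix, run_tests, open_pull_request])` ends with a `pr_title` in the state. Dropping `write_fix` from the list makes the last step raise, which is the kind of guard worth keeping in a loop that runs without supervision.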

MCP transforms team collaboration in ways that go beyond individual productivity. When every developer on a team has MCP-connected agents with access to the same tools, knowledge becomes less siloed. A junior developer's agent can navigate the codebase, understand deployment procedures, and follow established patterns as effectively as a senior developer's agent. The institutional knowledge that traditionally lived in senior engineers' heads gets encoded in MCP server configurations and tool descriptions that any agent can access.

The limitation is trust. MCP gives AI agents real capabilities, which means real consequences. A poorly prompted agent with MCP access can delete files, push bad code, or make unauthorized API calls. The specification does call for safeguards, such as keeping a human in the loop who can approve or deny tool invocations, but enforcing them is left to the host application, so the responsibility ultimately falls on the developer directing the agent.
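One way to act on that responsibility is to put a gate between the agent and its tools. The sketch below is a hypothetical wrapper, not part of the protocol or any SDK: tools listed in `DESTRUCTIVE` need an explicit approval callback to return `True`, and every attempt is appended to an audit log whether or not it was allowed.

```python
from typing import Callable

# Hypothetical set of tool names that must never run without approval.
DESTRUCTIVE = {"delete_file", "push_branch"}


class ToolDenied(Exception):
    """Raised when an approval callback rejects a tool call."""


def guarded_call(
    name: str,
    arguments: dict,
    tools: dict[str, Callable[..., object]],
    approve: Callable[[str, dict], bool],
    audit_log: list[dict],
) -> object:
    """Run a tool, asking for approval first if it is marked destructive."""
    allowed = approve(name, arguments) if name in DESTRUCTIVE else True
    # Record the attempt before acting, so denied calls are logged too.
    audit_log.append({"tool": name, "arguments": arguments, "allowed": allowed})
    if not allowed:
        raise ToolDenied(name)
    return tools[name](**arguments)
```

In practice the `approve` callback would prompt a person; in a test it can be a lambda that always returns `False`, which is enough to check that read-only tools still run and destructive ones are blocked.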

The MCP ecosystem is evolving rapidly, and the next wave of development will likely focus on multi-agent coordination and persistent tool state. Imagine agents that can hand off tasks to each other through MCP — one agent monitors production logs, detects an anomaly, and dispatches another agent to investigate and fix the issue, all without human intervention. The technical foundations for this exist today in the protocol specification, but the trust and safety frameworks needed to deploy it responsibly are still being developed. The developers building those frameworks — defining permission boundaries, audit trails, and rollback mechanisms — are doing the most important work in the AI tooling space right now.

Tags: MCP, AI, Claude, Developer Tools

Key Facts

- Category: Dev
- Reading time: 14 min read
- Technology: MCP
- Technology: AI
- Technology: Claude