From Camera to Flashcards: Building AI Smart Scan

How I turned a phone camera into a vocabulary extraction engine using Gemini's vision API and Cloud Functions.

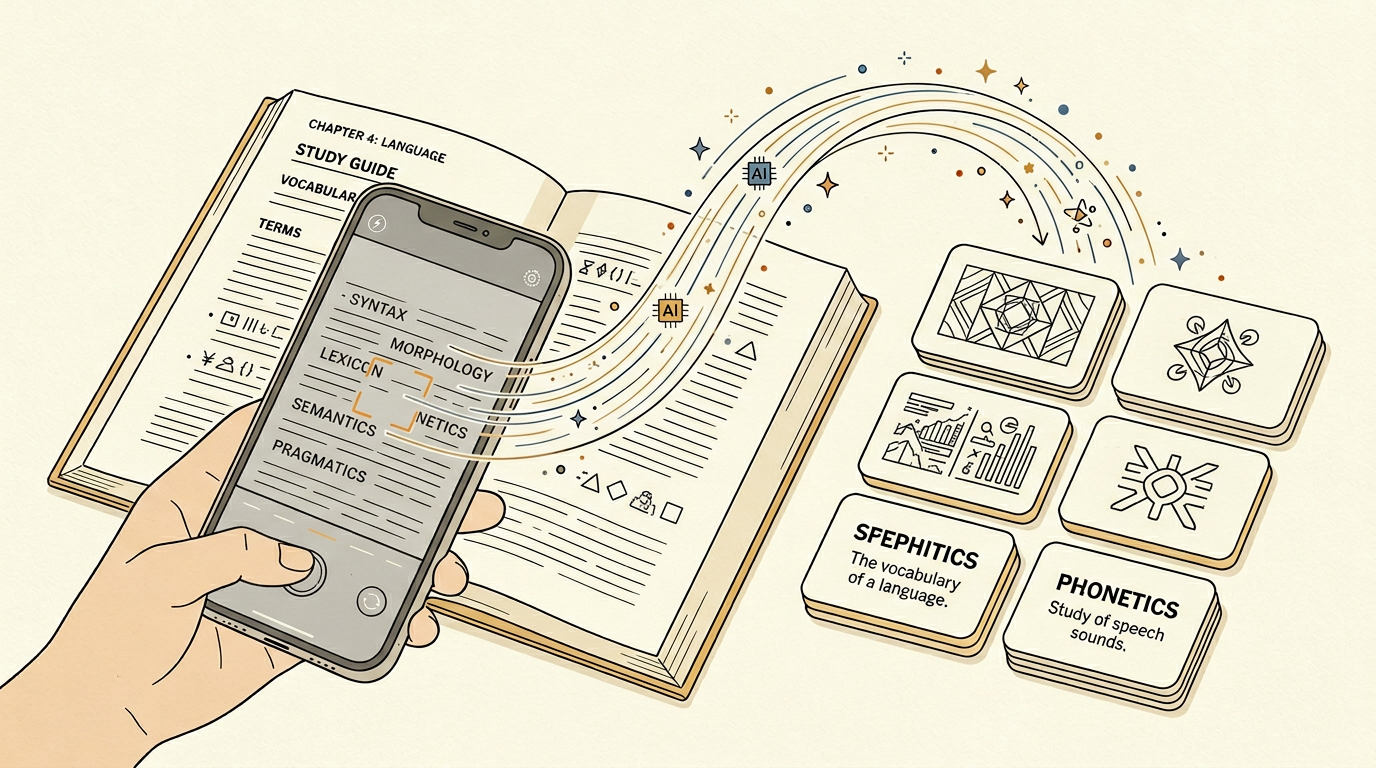

The idea was simple: point your phone camera at a textbook page, and the app automatically extracts vocabulary words and creates flashcards. The implementation was anything but simple — it involved camera capture, image preprocessing, Gemini's vision API, structured output parsing, and graceful error handling for blurry photos.

Multi-language support added significant complexity to the extraction pipeline. The system needed to handle English, Korean, Japanese, and Chinese text, often mixed on the same page. Language detection runs as a preprocessing step, analyzing character distributions to determine the primary language and any secondary languages present. Gemini's multilingual capabilities handle the actual extraction, but the prompt templates have to be language-specific to produce natural-sounding definitions and to handle linguistic nuances such as Korean honorifics or Japanese kanji readings.
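A minimal sketch of the character-distribution idea, not the app's actual detector. It assumes some text is already available (for example from an on-device text recognition pass, which this article does not specify), and the function names, the 10% secondary threshold, and the rule "Han characters count as Japanese only when kana is present" are all illustrative assumptions.

```python
from collections import Counter


def script_of(ch):
    """Map one character to a coarse script label, or None if it is not counted."""
    cp = ord(ch)
    if 0xAC00 <= cp <= 0xD7A3 or 0x1100 <= cp <= 0x11FF or 0x3130 <= cp <= 0x318F:
        return "korean"      # Hangul syllables and jamo
    if 0x3040 <= cp <= 0x30FF:
        return "japanese"    # hiragana and katakana
    if 0x4E00 <= cp <= 0x9FFF:
        return "han"         # CJK unified ideographs, attributed below
    if ch.isascii() and ch.isalpha():
        return "english"     # assumption: Latin letters are treated as English
    return None


def detect_languages(text, secondary_threshold=0.1):
    """Return (primary, secondaries) based on the share of characters per script."""
    counts = Counter(s for s in map(script_of, text) if s is not None)
    han = counts.pop("han", 0)
    if han:
        # Assumed heuristic: Han next to kana is Japanese, otherwise Chinese.
        target = "japanese" if counts.get("japanese", 0) > 0 else "chinese"
        counts[target] += han
    total = sum(counts.values())
    if total == 0:
        return None, []
    ranked = counts.most_common()
    primary = ranked[0][0]
    secondaries = [lang for lang, n in ranked[1:] if n / total >= secondary_threshold]
    return primary, secondaries
```

The result could then select a language-specific prompt template, with the secondary languages passed along as hints.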


The camera integration in Flutter uses platform channels to access native camera APIs. We needed high-resolution captures with auto-focus confirmation — a blurry image produces garbage vocabulary. The native layer signals when the camera has locked focus, and only then does the capture proceed.

Low-light photography was a persistent user complaint in early versions. Students often study in dimly lit environments, and standard camera captures produced noisy, low-contrast images that degraded OCR accuracy. We implemented adaptive exposure compensation that detects ambient light conditions and adjusts capture parameters accordingly. For extremely low-light scenarios, the app activates a multi-frame capture mode that takes three rapid exposures and computationally merges them for improved signal-to-noise ratio — similar to night mode in modern smartphone cameras.
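To illustrate why merging several exposures helps, here is a rough sketch that averages aligned grayscale frames pixel by pixel. Plain averaging is an assumption standing in for whatever merge the native layer performs, it ignores frame alignment entirely, and `merge_frames` is a hypothetical name.

```python
def merge_frames(frames):
    """Average equally sized grayscale frames (each a list of rows of 0-255 ints).

    Random sensor noise differs between exposures while the page content does
    not, so averaging keeps the signal and shrinks the noise.
    """
    if not frames:
        raise ValueError("at least one frame is required")
    height, width = len(frames[0]), len(frames[0][0])
    for frame in frames:
        if len(frame) != height or any(len(row) != width for row in frame):
            raise ValueError("all frames must have the same dimensions")
    n = len(frames)
    return [
        [round(sum(frame[y][x] for frame in frames) / n) for x in range(width)]
        for y in range(height)
    ]
```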

Image preprocessing happens client-side to reduce API costs. We resize to a maximum of 2048 px on the longest edge, convert to JPEG at 85% quality, and apply contrast enhancement for photographed text. This reduces the payload from 8 MB to under 500 KB while maintaining text legibility.
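The resize rule above comes down to a small piece of arithmetic. This sketch only computes the target dimensions; the actual resampling and JPEG encoding would be done by an image library on the device. `fit_within` is a hypothetical helper name.

```python
MAX_EDGE = 2048     # longest edge in pixels, from the text above
JPEG_QUALITY = 85   # quality setting from the text above, passed to the encoder


def fit_within(width, height, max_edge=MAX_EDGE):
    """Scale (width, height) down so the longest edge is at most max_edge.

    The aspect ratio is preserved and images that already fit are left alone.
    """
    longest = max(width, height)
    if longest <= max_edge:
        return width, height
    scale = max_edge / longest
    return max(1, round(width * scale)), max(1, round(height * scale))
```

For example, a 4032 by 3024 capture would be scaled to 2048 by 1536, while a 1000 by 800 image would pass through unchanged.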

Page boundary detection ensures only the relevant content area is processed. Users rarely photograph a perfectly aligned, full-frame textbook page — there's usually desk surface, fingers, or adjacent pages visible. We implemented a lightweight edge detection algorithm running on-device that identifies the page boundaries, applies perspective correction for angled shots, and crops to the content area before sending to the API. This preprocessing step improved extraction accuracy by roughly 15% and reduced API costs by eliminating irrelevant image regions.

Gemini's vision API receives the processed image with a structured prompt: identify all vocabulary words visible in the image, provide definitions, and categorize by difficulty. The response comes back as JSON, which we parse into flashcard objects. The prompt engineering was iterative — early versions confused headers with vocabulary, or generated definitions that were too vague.
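As a sketch of the parsing step, assuming the prompt asks for a JSON object with a `cards` array whose entries carry `term`, `definition`, and `difficulty` strings. That shape and the names `Flashcard` and `parse_flashcards` are assumptions for illustration, not the app's real schema.

```python
import json
from dataclasses import dataclass
from typing import List


@dataclass
class Flashcard:
    term: str
    definition: str
    difficulty: str


def parse_flashcards(raw: str) -> List[Flashcard]:
    """Turn the model's JSON text into Flashcard objects, skipping incomplete entries."""
    try:
        payload = json.loads(raw)
    except json.JSONDecodeError:
        return []  # the caller can treat an empty list as "retry or show an error"
    entries = payload.get("cards", []) if isinstance(payload, dict) else []
    if not isinstance(entries, list):
        return []
    cards = []
    for entry in entries:
        if not isinstance(entry, dict):
            continue
        fields = [entry.get(key) for key in ("term", "definition", "difficulty")]
        # Keep only entries where all three fields are non-empty strings.
        if all(isinstance(value, str) and value.strip() for value in fields):
            cards.append(Flashcard(*(value.strip() for value in fields)))
    return cards
```

Parsing defensively like this means one malformed entry drops a single card instead of failing the whole scan.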

Structured output validation was critical for reliability. Gemini's JSON responses were usually well-formed but occasionally contained invalid entries: definitions that were actually translations, inconsistent difficulty ratings, or duplicate vocabulary entries. We built a validation layer that checks each extracted card against a set of heuristics: the definition must be between 10 and 200 characters long, the difficulty must map to our predefined scale, and no two cards in a batch can have identical terms. Cards that fail validation are flagged for regeneration with a more specific prompt.
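The three heuristics can be sketched roughly as follows. The 10 to 200 character bounds come from the text; the difficulty labels, the dictionary card shape, and the case-insensitive duplicate check are assumptions for illustration.

```python
ALLOWED_DIFFICULTIES = {"easy", "medium", "hard"}  # assumed scale, not the app's real one


def validate_batch(cards):
    """Split a batch into accepted cards and (card, problems) pairs to regenerate."""
    accepted, flagged = [], []
    seen_terms = set()
    for card in cards:
        problems = []
        if not 10 <= len(card["definition"].strip()) <= 200:
            problems.append("definition_length")
        if card["difficulty"] not in ALLOWED_DIFFICULTIES:
            problems.append("difficulty")
        key = card["term"].strip().casefold()  # assumption: terms compare case-insensitively
        if key in seen_terms:
            problems.append("duplicate_term")
        seen_terms.add(key)
        if problems:
            flagged.append((card, problems))
        else:
            accepted.append(card)
    return accepted, flagged
```

Returning the list of problems alongside each flagged card gives the regeneration prompt something specific to ask for.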

Rate limiting was essential. Each scan costs API credits, and an enthusiastic user could burn through the monthly budget in an afternoon. We implemented a daily scan quota with a premium tier that increases the limit, plus a client-side cooldown that prevents rapid successive scans.
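A simplified sketch of how a daily quota and a cooldown interact. In the app the quota would be enforced server-side and the cooldown on the client; this example folds both into one class for brevity, and the limits shown (20 scans, 10 seconds) are placeholders rather than the real values.

```python
from datetime import datetime, timedelta


class ScanLimiter:
    """Tracks one user's scans: a per-day quota plus a minimum gap between scans."""

    def __init__(self, daily_quota=20, cooldown=timedelta(seconds=10)):
        self.daily_quota = daily_quota  # a premium tier would pass a larger value
        self.cooldown = cooldown
        self._day = None
        self._used = 0
        self._last_scan = None

    def try_scan(self, now):
        """Return "ok", "cooldown", or "quota_exceeded" for a scan attempted at `now`."""
        if self._day != now.date():
            self._day, self._used = now.date(), 0  # new calendar day resets the quota
        if self._last_scan is not None and now - self._last_scan < self.cooldown:
            return "cooldown"
        if self._used >= self.daily_quota:
            return "quota_exceeded"
        self._used += 1
        self._last_scan = now
        return "ok"
```

Passing `now` in explicitly keeps the logic easy to test without waiting on a real clock.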

Caching and deduplication prevented redundant API calls and duplicate flashcards. When a user scans a page, we generate a perceptual hash of the processed image and check it against previously scanned images. If the hash matches within a similarity threshold, we return the cached results instead of making another API call. This also extends to vocabulary-level deduplication — if a scanned word already exists in the user's deck, the system highlights it as a review opportunity rather than creating a duplicate card.
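To make the similarity check concrete, here is a sketch using an average hash, one common perceptual hash, as a stand-in for whichever algorithm the app uses. It assumes the image has already been downscaled to an 8x8 grayscale grid, and the threshold of 5 differing bits is an illustrative guess.

```python
def average_hash(pixels):
    """Build a 64-bit hash from an 8x8 grayscale grid: 1 where a pixel is above the mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a, b):
    """Number of bit positions where two hashes differ."""
    return bin(a ^ b).count("1")


def find_cached(image_hash, cache, max_distance=5):
    """Return the cached result whose hash is closest, if within max_distance bits."""
    best = None
    for cached_hash, result in cache.items():
        distance = hamming(image_hash, cached_hash)
        if distance <= max_distance and (best is None or distance < best[0]):
            best = (distance, result)
    return best[1] if best is not None else None
```

A linear scan over the cache is fine for one user's scan history; a much larger cache would need an index suited to Hamming distance.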

What surprised us most was the accuracy on handwritten notes. Users started scanning their own handwritten vocabulary lists, and Gemini's vision model handled handwriting recognition remarkably well, with about 92% accuracy on reasonably legible handwriting. We hadn't designed for this use case, but it became one of the most-used features.

Batch scanning mode emerged from observing how power users interacted with the feature. Students studying for exams wanted to scan entire textbook chapters — 20 or 30 pages in sequence. The batch mode queues multiple captures, processes them sequentially through the pipeline, and presents a unified review screen showing all extracted vocabulary organized by page. Users can select which cards to add to their deck, merge duplicates across pages, and organize results into study sets — all before any cards are committed to their collection.
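The cross-page merge for the review screen might look something like this sketch. It assumes each page yields a list of card dictionaries with a `term` key and keeps the first definition seen for a repeated term; both choices are illustrative rather than the app's actual behavior.

```python
def merge_batch(pages):
    """Merge cards from several pages, recording which pages each term appeared on.

    `pages` is a list of per-page card lists, in scan order.
    """
    merged = {}
    for page_number, cards in enumerate(pages, start=1):
        for card in cards:
            key = card["term"].strip().casefold()
            if key not in merged:
                # First sighting: copy the card and start its page list.
                merged[key] = {**card, "pages": [page_number]}
            elif page_number not in merged[key]["pages"]:
                merged[key]["pages"].append(page_number)
    return list(merged.values())
```

Because nothing is written to the deck here, the user can still deselect cards or regroup them into study sets before committing.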



Tags: AI, Gemini, Computer Vision, Flutter

See Also:

→ The Five-Word Quiz That Fills an Empty Deck on Day One
→ AI Agents Are Replacing the Traditional Software Development Lifecycle
→ Building a Multi-Tenant Marketplace from Scratch
→ PostgreSQL vs Firestore: A Practical Decision Framework
→ How GenAI Reduced Our Operational Overhead by 90%

Key Facts:

- Category: Dev
- Reading time: 12 min
- Technology: AI, Gemini, Computer Vision